AI is no longer experimental. It is actively driving decisions across industries. From healthcare diagnostics to logistics optimization, AI systems are solving real problems at scale.

But here’s what most businesses overlook.

AI is not a single system. It is built on agents. And not all agents think, act, or perform in the same way.

Some agents react instantly. Some analyze context. Others plan ahead or learn continuously. Each type is designed for a specific kind of problem.

Choosing the wrong agent architecture does more than reduce performance. It leads to wasted investment, poor scalability, and unreliable outcomes.

This guide breaks down the types of agents in AI, how they work, where they fit, and how to choose the right one for your use case.

What is an Agent in AI?

An AI agent is a system that performs three core functions:

- It perceives its environment using data inputs

- It processes that data to make decisions

- It acts to achieve a defined goal

This simple loop powers everything from recommendation engines to autonomous systems.
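The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the names `run_agent` and `thermostat`, the temperature thresholds, and the 21-degree target are all assumptions made up for the example.

```python
from typing import Callable, Iterable, List

# Hypothetical types for illustration only.
Percept = float   # e.g. a temperature reading in degrees Celsius
Action = str


def run_agent(percepts: Iterable[Percept],
              decide: Callable[[Percept], Action]) -> List[Action]:
    """Run the perceive -> decide -> act loop over a stream of inputs."""
    actions = []
    for percept in percepts:          # 1. perceive: read the next input
        action = decide(percept)      # 2. decide: map the input to a choice
        actions.append(action)        # 3. act: here we only record the action
    return actions


def thermostat(temperature: Percept) -> Action:
    """Toy decision rule whose goal is to keep a room near 21 degrees."""
    if temperature < 20.0:
        return "heat"
    if temperature > 22.0:
        return "cool"
    return "idle"


actions = run_agent([18.5, 21.0, 24.2], thermostat)
print(actions)  # ['heat', 'idle', 'cool']
```

Every agent type below changes what happens inside the decide step. The loop around it stays the same.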

For businesses, this matters more than the algorithm itself. The type of intelligent agent you choose determines how your system behaves under pressure, adapts to change, and scales over time.

Types of AI Agents Explained

Let’s break down each agent type with a plain-English explanation, a real-world analogy, and an example you’ll recognize.

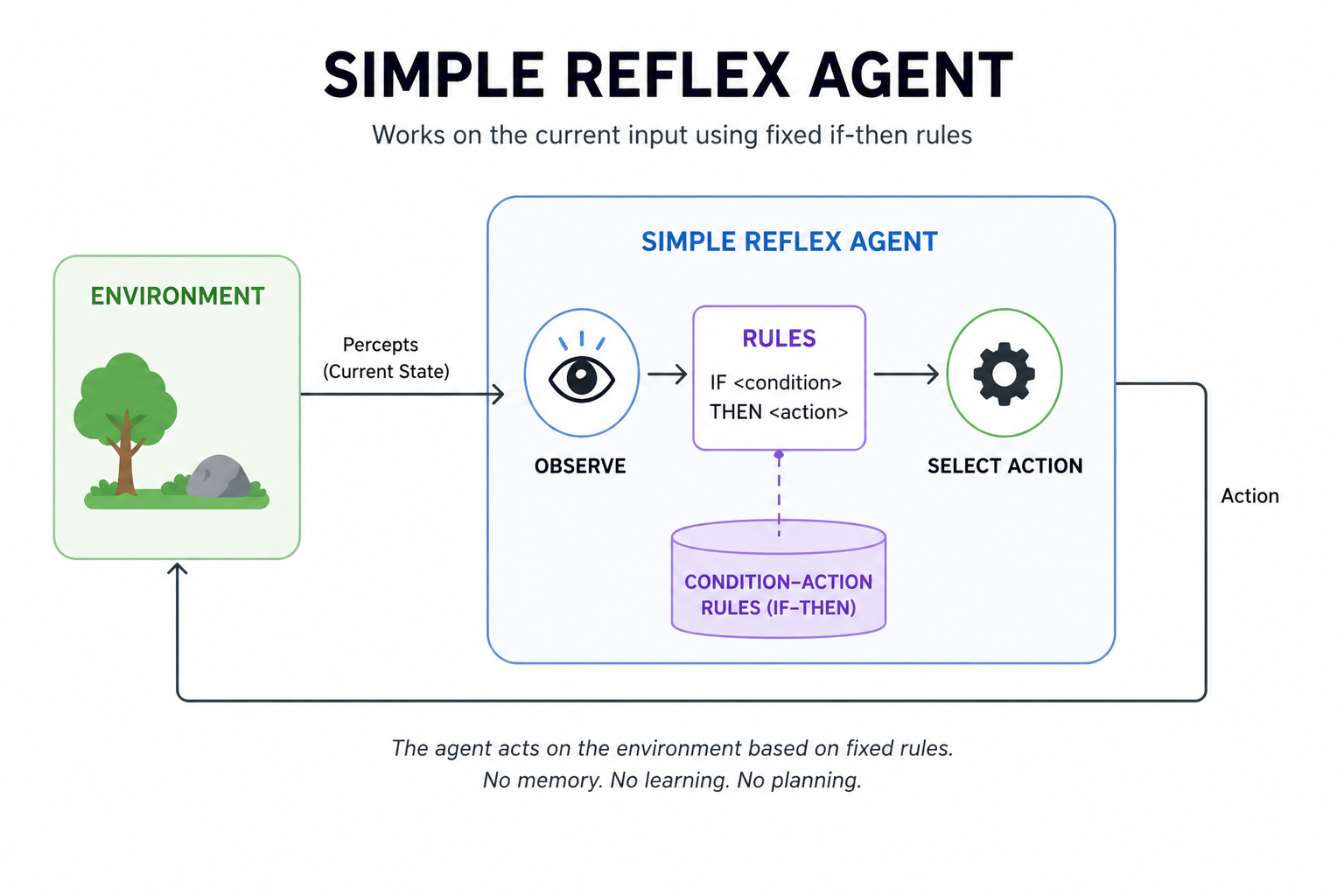

1. Simple Reflex Agent

Simple reflex agents are the most basic type of AI agents. They follow fixed if-then rules. They observe the current state and trigger an action immediately.

There is no memory of what happened before. No learning. No planning. They work best in stable, fully observable environments with predefined rules.

Key Characteristics

- Reactive: These agents respond immediately to inputs. They do not consider past events or predict future outcomes.

- Limited Scope: They work well in predictable environments. Tasks must be simple, and outcomes must follow clear, fixed rules.

- Quick Response: Decisions are based only on the current input. This allows the agent to act without any delay.

- No Learning: These agents cannot improve over time. Their behavior stays the same no matter how many times they act.

Example of a Simple Reflex Agent

Basic email spam filters that classify emails as spam or not based on predefined rules like keywords and sender patterns.
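A rule-based filter like this could look roughly as follows. It is a sketch under stated assumptions: the keyword list, the blocked sender suffix, and the `Email` and `classify` names are invented for illustration, and real filters use far richer rules.

```python
from dataclasses import dataclass

# Assumed rule set, for illustration only.
SPAM_KEYWORDS = ("free money", "winner", "act now")
BLOCKED_SENDER_SUFFIXES = (".example-spam.test",)


@dataclass(frozen=True)
class Email:
    sender: str
    subject: str
    body: str


def classify(email: Email) -> str:
    """Return 'spam' or 'not spam' using only the current email (no memory)."""
    text = f"{email.subject} {email.body}".lower()
    # Rule 1: if the sender matches a blocked pattern, then flag as spam.
    if email.sender.lower().endswith(BLOCKED_SENDER_SUFFIXES):
        return "spam"
    # Rule 2: if any known spam keyword appears, then flag as spam.
    if any(keyword in text for keyword in SPAM_KEYWORDS):
        return "spam"
    # Default rule: anything that matches no condition passes through.
    return "not spam"
```

The function keeps no state between calls. The same email always gets the same answer, which is exactly what makes this agent fast and also what stops it from improving.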

Strengths

Delivers instant responses using fixed rules, making it fast, simple to build, and cost-efficient for predictable tasks.

Best for

Simple, predictable environments with clear rules.

Limitation

Fails the moment the situation is unclear or unpredictable.

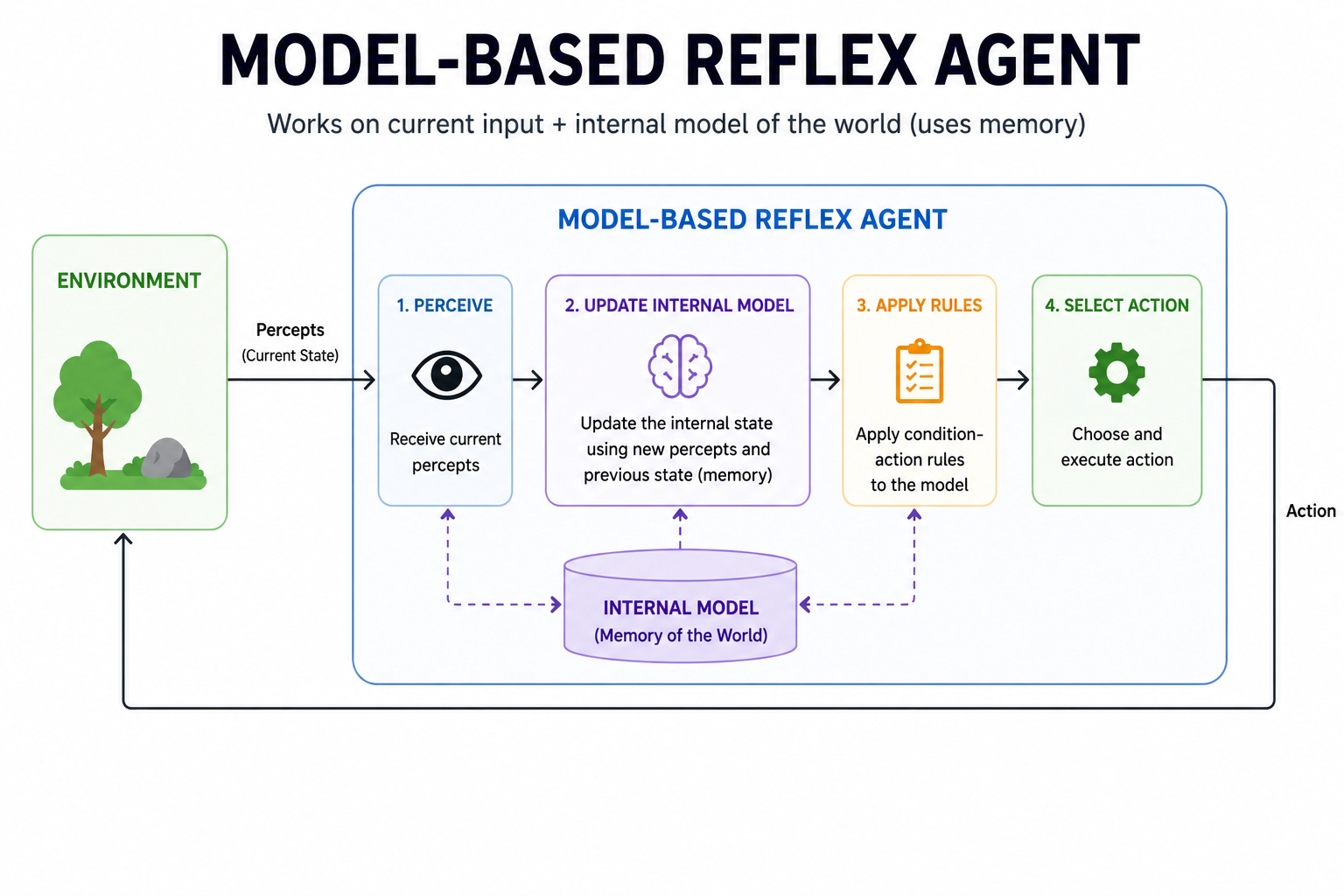

2. Model-Based Reflex Agent

Model-based reflex agents improve on simple reflex systems by adding context. They maintain an internal model of the environment, which lets them track changes over time.

This helps them cope with partial observability, where the current input does not show the full picture. Decisions are still reactive but better informed. The agents update their internal state as new information arrives, so they work well in dynamic settings.
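To make the idea of an internal model concrete, here is a small sketch based on the classic two-room vacuum world. The class name, the room labels, and the action strings are assumptions chosen for the example. The agent only sees the room it is in, so it remembers what it last knew about the other one.

```python
from typing import Dict, Optional, Tuple

Percept = Tuple[str, bool]  # (current room, is it dirty?)


class ModelBasedVacuum:
    """Two-room vacuum agent that remembers what it has already seen."""

    def __init__(self) -> None:
        # Internal model: last known state of each room (None = not yet seen).
        self.dirty: Dict[str, Optional[bool]] = {"A": None, "B": None}

    def act(self, percept: Percept) -> str:
        location, is_dirty = percept
        # Update the internal state from the new observation.
        self.dirty[location] = is_dirty
        if is_dirty:
            # The model predicts the room is clean once the dirt is removed.
            self.dirty[location] = False
            return "suck"
        other = "B" if location == "A" else "A"
        # Move only if the model says the other room is dirty or unknown.
        if self.dirty[other] is not False:
            return f"move_to_{other}"
        return "idle"
```

A simple reflex agent in the same world would keep moving between rooms forever, because it cannot remember that both are already clean. The stored state is what lets this agent stop.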

Key Characteristics

- Internal State: These agents maintain a model of the world. This helps them make decisions even when some information is not directly visible.

- Adaptive: They update their internal model as new information comes in. This allows them to adjust to changes in the environment.

- Better Decision-Making: Using the internal model leads to more informed choices. This reduces the chance of taking a wrong action.

- Increased Complexity: Maintaining the internal model requires more memory and computing power than a simple reflex agent.

Example of Model-Based Reflex Agent

Robotic arms in manufacturing that adjust movements based on real-time sensor data and stored information about previous positions.

Strengths

Uses internal memory to handle incomplete data, enabling more accurate decisions and better adaptability in changing environments.

Best for

Partially observable environments where you cannot see everything at once.

Limitation

If the internal model is outdated or flawed, the decisions will be too.

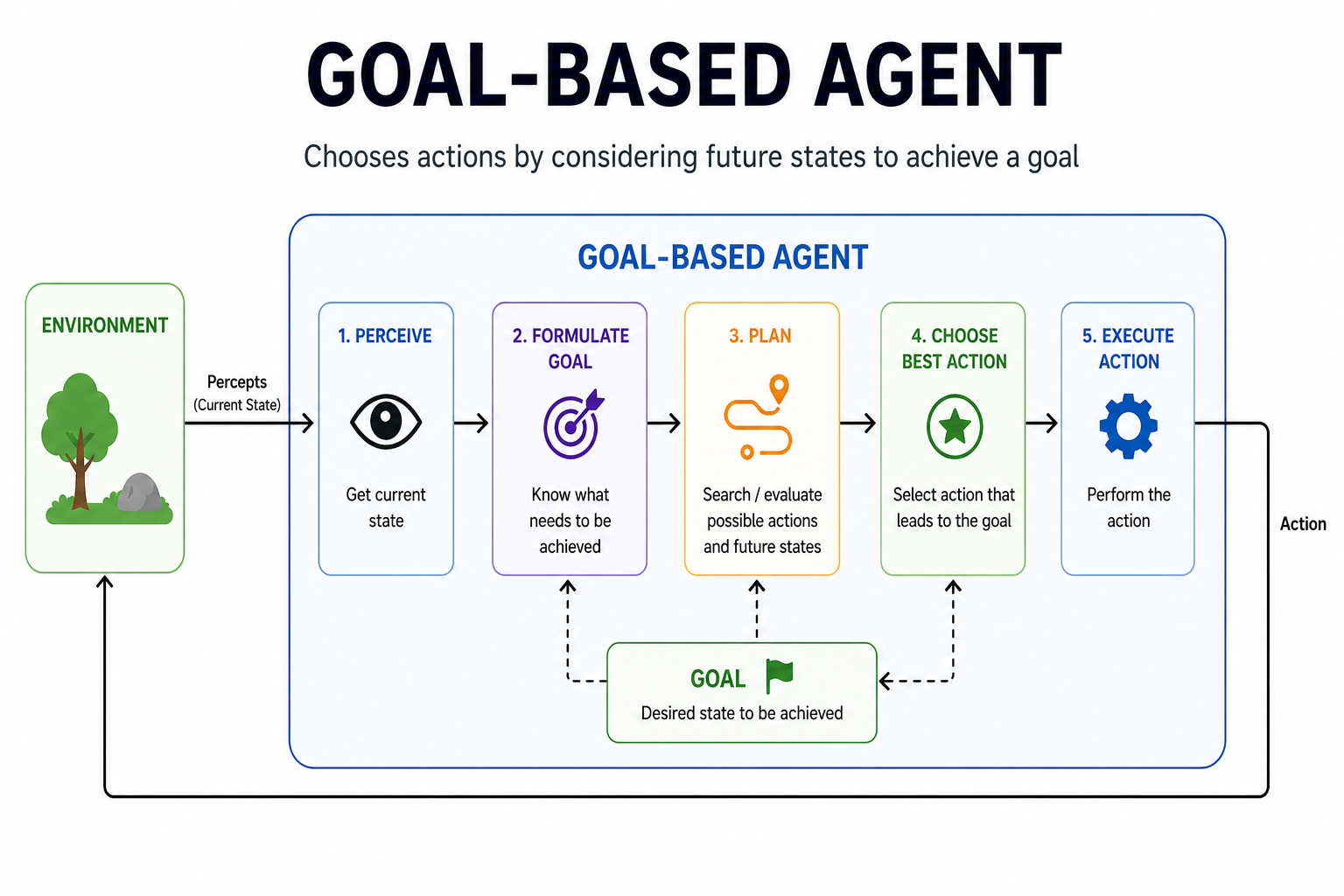

3. Goal-Based Agent

Goal-based agents select actions by thinking about future outcomes. They have a clear goal and work toward it step by step. Unlike reflex agents, they do not just react. They plan.

They explore many possible action sequences before choosing one. These are the core problem-solving agents in artificial intelligence. They need well-defined goals and strong planning algorithms to perform well.
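Planning by search can be shown with a small grid example. The sketch below uses breadth-first search, which is one simple planning method among many and is a stand-in here, not the method any particular product uses. The grid layout and the `plan_path` name are assumptions for illustration.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]  # (row, column)


def plan_path(grid: List[str], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Breadth-first search for a shortest path; '#' cells are walls."""
    rows, cols = len(grid), len(grid[0])
    came_from: Dict[Cell, Optional[Cell]] = {start: None}
    frontier = deque([start])
    while frontier:
        current = frontier.popleft()
        if current == goal:
            # Goal reached: walk the parent links back to the start.
            path = []
            node: Optional[Cell] = current
            while node is not None:
                path.append(node)
                node = came_from[node]
            return path[::-1]
        r, c = current
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] != "#" and nxt not in came_from:
                came_from[nxt] = current
                frontier.append(nxt)
    return None  # no sequence of actions reaches the goal


# Hypothetical map: '.' is free space, '#' is a wall.
GRID = [
    "..#",
    ".##",
    "...",
]
print(plan_path(GRID, (0, 0), (2, 2)))
```

The agent commits to nothing until it has found a full sequence of steps that ends at the goal. If the map changes, it can call `plan_path` again from its current cell.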

Key Characteristics

- Goal-Oriented: Every decision is tied to a goal. The agent acts only in ways that bring it closer to achieving that goal.

- Planning and Search: It explores multiple action paths before making a decision. This helps it find the most effective sequence of steps.

- Flexible: If conditions change mid-task, the agent can replan. It adjusts its strategy to stay on track toward its goal.

- Future-Oriented: Unlike reflex agents, it looks ahead. It predicts what will happen next before taking any action.

Example of Goal-Based Agent

Chess-playing AI that evaluates possible moves and selects the one that increases its chances of winning the game.

Strengths

Plans actions toward a defined goal, allowing flexible decision-making and the ability to adapt or re-plan when conditions change.

Best for

Tasks that require planning and multi-step decisions.

Limitation

Struggles when goals are poorly defined or when the number of possible paths becomes too large to search efficiently.

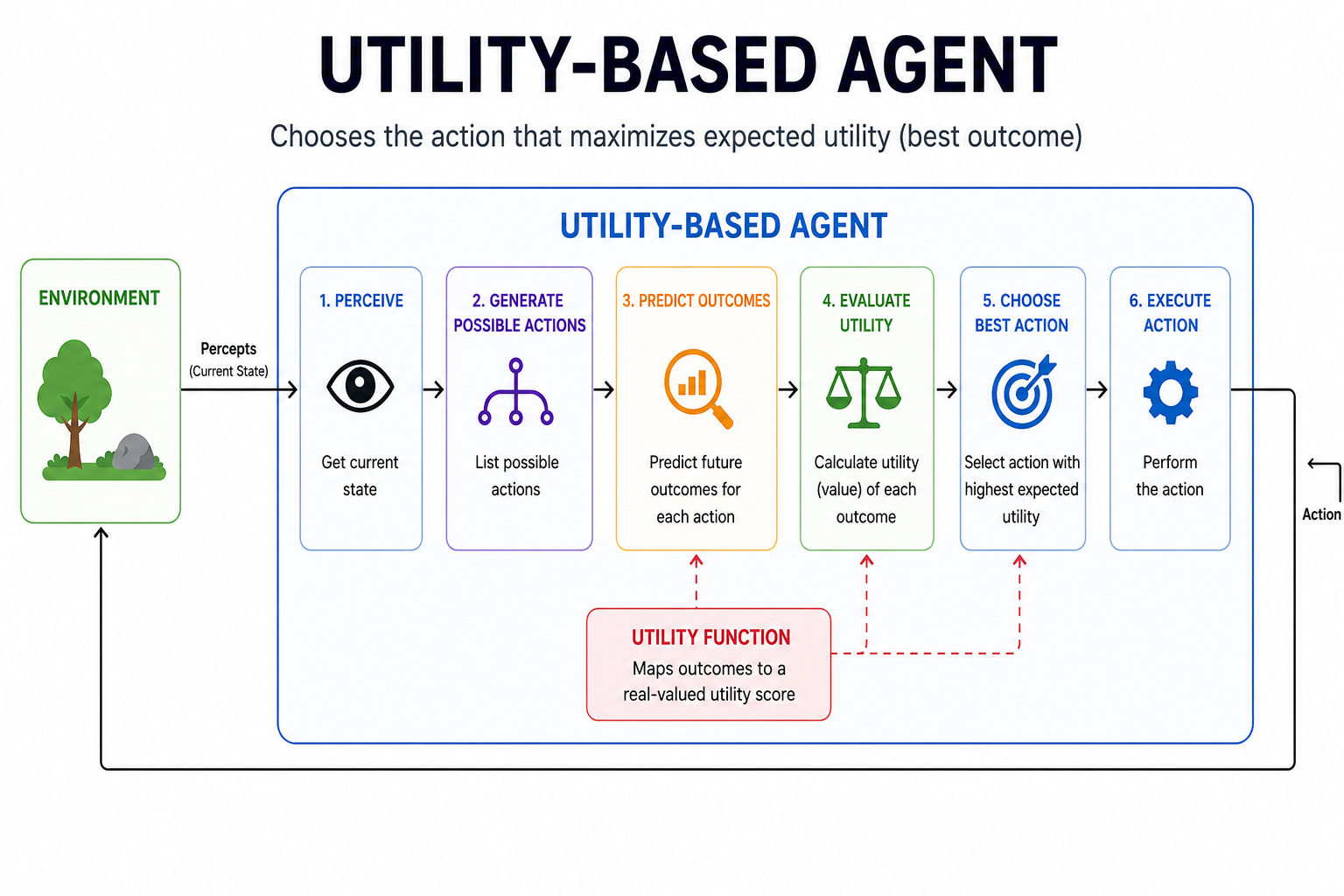

4. Utility-Based Agent

Utility-based agents go beyond just reaching a goal. They evaluate how good each possible outcome is. They use a utility function to score different options and pick the one with the highest value.

This makes utility-based agents well suited to handling trade-offs. They are also among the agent types that can deal with uncertainty. How well an agent performs depends on the quality of its utility function.
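A utility function can be as simple as a weighted sum. The sketch below assumes made-up weights and three hypothetical shipping routes. Every route reaches the goal of delivering the package, but the routes differ in how good the outcome is.

```python
from typing import Dict

# Assumed weights: negative values penalize cost, risk, and time.
WEIGHTS: Dict[str, float] = {"benefit": 1.0, "cost": -0.5, "risk": -2.0, "time": -0.1}


def utility(option: Dict[str, float]) -> float:
    """Score an option as a weighted sum of its attributes."""
    return sum(WEIGHTS[name] * option[name] for name in WEIGHTS)


def choose(options: Dict[str, Dict[str, float]]) -> str:
    """Pick the option name with the highest utility score."""
    return max(options, key=lambda name: utility(options[name]))


# Hypothetical routes with illustrative numbers, not real data.
ROUTES = {
    "air":  {"benefit": 10.0, "cost": 8.0, "risk": 1.0, "time": 1.0},
    "road": {"benefit": 10.0, "cost": 3.0, "risk": 2.0, "time": 4.0},
    "sea":  {"benefit": 10.0, "cost": 1.0, "risk": 3.0, "time": 20.0},
}
print(choose(ROUTES))  # road
```

Changing the weights changes the winner. That is the point: the preferences live in the utility function, not in the decision code.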

Key Characteristics

- Multi-Criteria Decision Making: These agents simultaneously weigh multiple factors. Cost, risk, time, and benefit are all considered before taking action.

- Trade-Offs: They are built to balance competing goals. They find the best compromise when two or more objectives conflict.

- Customizable: The utility function can be adjusted to reflect different preferences. This makes them flexible across many industries and use cases.

- Increased Complexity: Designing a good utility function is hard. Getting the scoring right requires careful thinking and testing.

Example of Utility-based Agent

Netflix recommendation engines that suggest content based on user preferences, watch history, and predicted satisfaction.

Strengths

Evaluates multiple outcomes to choose the best option, helping optimize decisions while balancing risk, cost, and benefits.

Best for

Situations with multiple competing goals or uncertain outcomes.

Limitation

Designing an accurate utility function is hard. A poorly built one leads to subtle errors at scale.

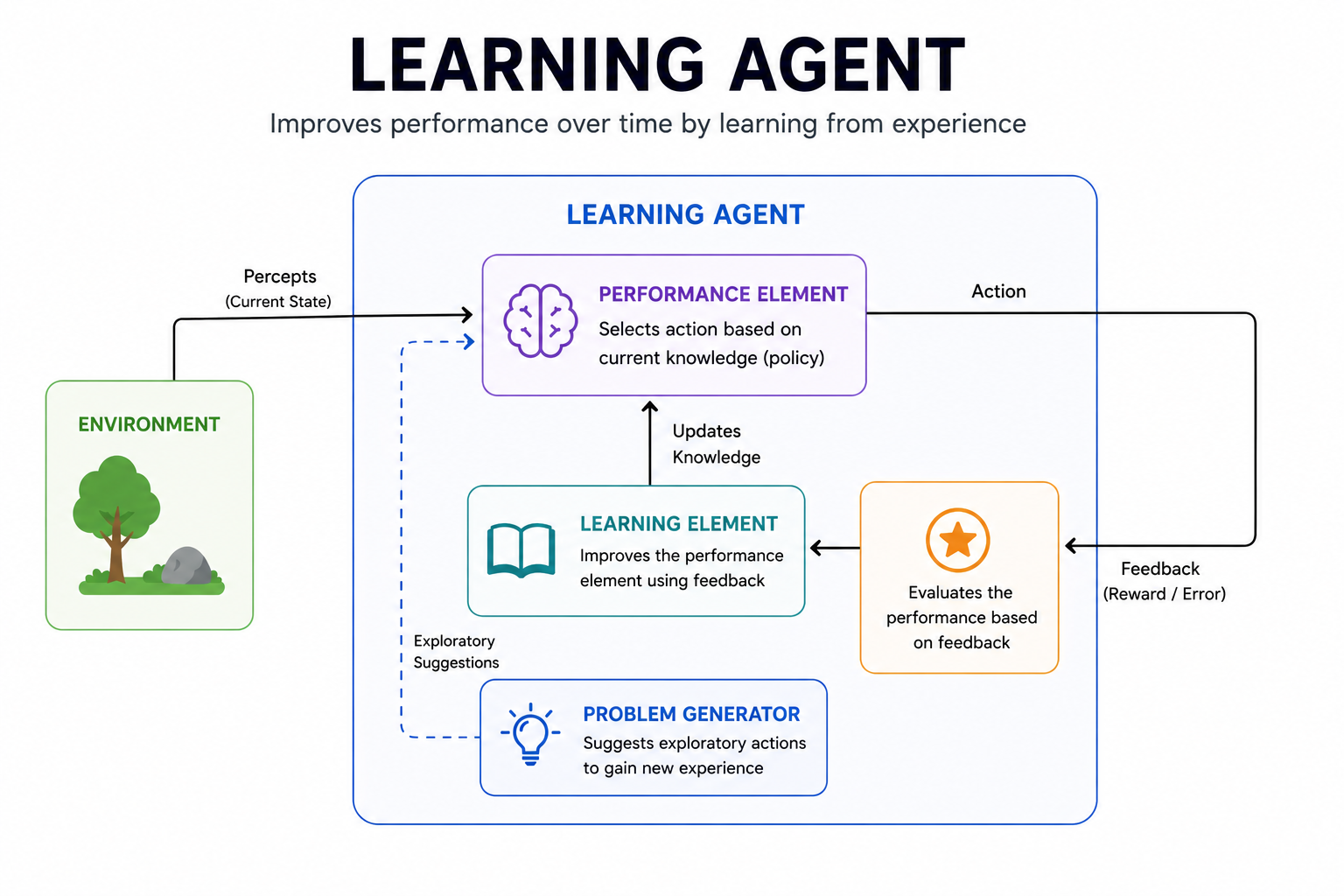

5. Learning Agent

Learning agents improve their performance over time by analyzing feedback and refining their behavior. They are not fully pre-programmed.

They contain four parts: a performance element (which makes decisions), a critic (which evaluates outcomes), a learning element (which updates strategy), and a problem generator (which suggests new actions to try).
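The four parts can be mapped onto a very small program. This is a toy sketch, assuming a fixed set of actions and a numeric reward for each one. The class, the method names, the reward values, and the exploration rate are all illustrative assumptions, and real learning agents use far more capable models.

```python
import random
from typing import Callable, Dict, List


class LearningAgent:
    """Toy agent that learns which action pays off best from feedback."""

    def __init__(self, actions: List[str], explore_rate: float = 0.1,
                 seed: int = 0) -> None:
        self.actions = actions
        self.explore_rate = explore_rate
        self.rng = random.Random(seed)
        self.value: Dict[str, float] = {a: 0.0 for a in actions}  # learned estimates
        self.count: Dict[str, int] = {a: 0 for a in actions}

    def performance_element(self) -> str:
        """Decide: pick the action with the best current estimate."""
        return max(self.actions, key=lambda a: self.value[a])

    def problem_generator(self) -> str:
        """Explore: suggest an untried action first, otherwise a random one."""
        untried = [a for a in self.actions if self.count[a] == 0]
        return untried[0] if untried else self.rng.choice(self.actions)

    def critic(self, reward: float, action: str) -> float:
        """Evaluate: how far was the outcome from what was expected?"""
        return reward - self.value[action]

    def learning_element(self, action: str, error: float) -> None:
        """Update: move the estimate toward the observed reward (running mean)."""
        self.count[action] += 1
        self.value[action] += error / self.count[action]

    def step(self, reward_fn: Callable[[str], float]) -> str:
        must_explore = any(n == 0 for n in self.count.values())
        if must_explore or self.rng.random() < self.explore_rate:
            action = self.problem_generator()
        else:
            action = self.performance_element()
        self.learning_element(action, self.critic(reward_fn(action), action))
        return action


REWARDS = {"greet": 0.2, "answer": 0.8}   # hypothetical feedback scores
agent = LearningAgent(list(REWARDS))
for _ in range(50):
    agent.step(lambda action: REWARDS[action])
print(agent.performance_element())  # answer
```

Nothing in the code says which action is best. The agent works that out from the feedback it receives, so flawed feedback would teach it the wrong habit just as reliably.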

Key Characteristics

- Adaptive Learning: These agents improve through continuous feedback. Every interaction helps them make better decisions in the future.

- Exploration vs. Exploitation: They balance trying new actions with using strategies that already work. This helps them find better solutions over time.

- Flexibility: Learning agents can adapt to a wide range of tasks and environments. New data shapes their behavior without needing manual reprogramming.

- Generalization: Lessons learned in one situation can be applied to new but similar situations. This improves their overall versatility.

Example of Learning Agent

Customer service chatbots that improve responses over time by learning from past interactions and user feedback.

Strengths

Continuously improves from experience, adapting to new data and handling complex patterns over time.

Best for

Dynamic, changing environments where the right answer evolves over time.

Limitation

Requires large amounts of data and time to train, and can develop bad habits if the feedback it learns from is flawed.

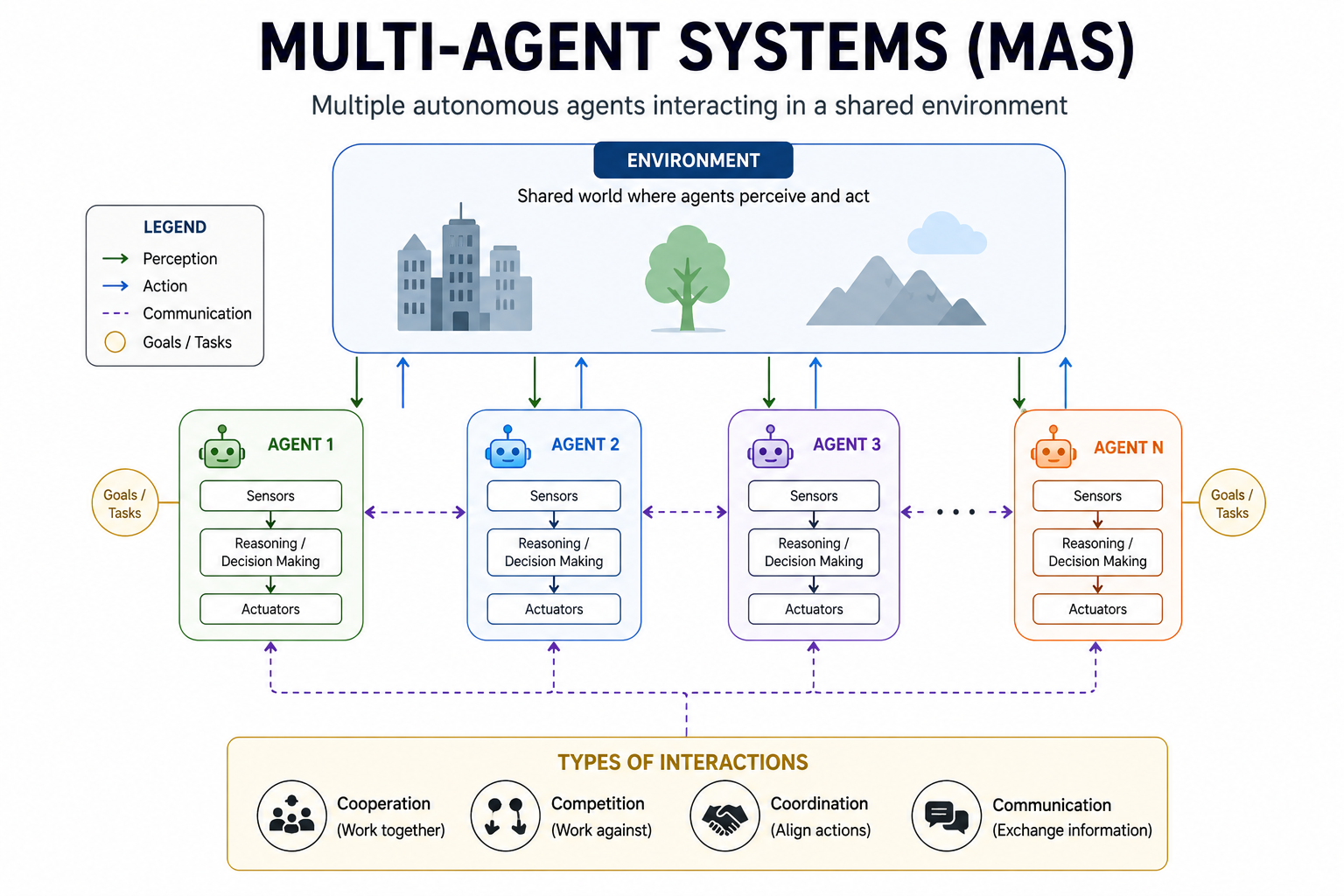

6. Multi-Agent Systems (MAS)

Multi-agent systems are networks of individual AI agents that interact in a shared environment. Each agent acts on its own. Together, they solve problems too large or complex for a single agent.

Agents can cooperate, compete, or do both. There is no central controller. Decisions are distributed across the network. This makes multi-agent systems powerful for large-scale and real-time challenges.
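Here is a minimal sketch of decentralized decision-making. It assumes two hypothetical courier agents on a one-dimensional street and a shared board of open tasks. The names, positions, and tasks are invented for the example. The `simulate` loop only gives each agent a turn. It does not tell any agent what to do.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Courier:
    """An autonomous agent that picks its own next task."""
    name: str
    position: int                       # location on a one-dimensional street
    done: List[str] = field(default_factory=list)

    def choose_task(self, open_tasks: Dict[str, int]) -> Optional[str]:
        # Each agent decides alone, using only its own position and the
        # shared task board; nobody assigns work to it.
        if not open_tasks:
            return None
        return min(open_tasks,
                   key=lambda t: (abs(open_tasks[t] - self.position), t))


def simulate(couriers: List[Courier], tasks: Dict[str, int]) -> Dict[str, List[str]]:
    """Let agents take turns claiming tasks until the shared board is empty."""
    open_tasks = dict(tasks)            # the shared environment
    while open_tasks:
        for courier in couriers:
            task = courier.choose_task(open_tasks)
            if task is None:
                break
            courier.position = open_tasks.pop(task)   # claim it and move there
            courier.done.append(task)
    return {c.name: c.done for c in couriers}


team = [Courier("north", 0), Courier("south", 10)]
print(simulate(team, {"t1": 1, "t2": 9, "t3": 4, "t4": 7}))
```

The work ends up split by location even though no agent was told to cover a region. The same local rule can also cause conflict, for example when two agents are equally close to the last task, which is where coordination gets hard.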

Key Characteristics

- Autonomous Agents: Each agent operates independently, guided by its own goals and the information available to it. No single agent controls the others.

- Interactions: Agents communicate, cooperate, or compete with each other. These interactions help achieve both individual and shared objectives.

- Distributed Problem Solving: Complex tasks are divided among multiple agents. This improves speed and efficiency on large problems.

- Decentralization: There is no central authority making all the decisions. Each agent decides independently, making the system more resilient.

Example of Multi-Agent Systems

Multiplayer game AI where different agents interact, compete, or collaborate to simulate realistic gameplay.

Strengths

Combines multiple agents to solve large-scale problems, offering scalability, resilience, and efficient task distribution.

Best for

Large-scale distributed problems too complex for a single agent.

Limitation

Coordination between agents is complex. Miscommunication or conflicting goals can cause the whole system to behave unpredictably.

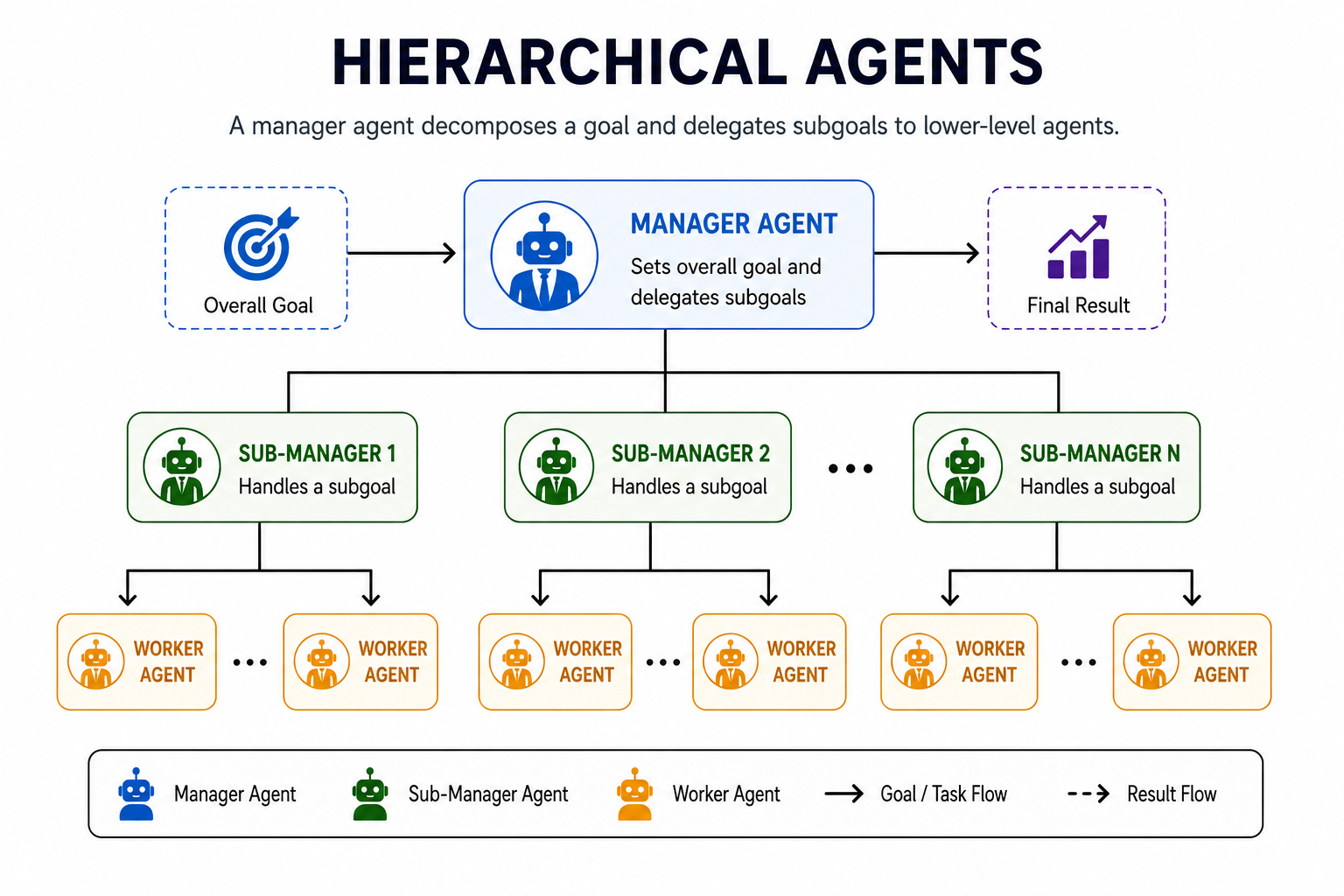

7. Hierarchical Agents

Hierarchical agents are organized in layers. Higher-level agents break big goals into smaller tasks and pass them down. Lower-level agents handle the actual execution.

Each layer only communicates with the one directly above or below it. This structure makes complex, multi-step problems easier to manage. It mirrors how real organizations and teams are structured.
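The layering can be sketched with three small classes. This is an illustrative structure only: the delivery-robot goal, the decomposition tables, and the `Planner`, `Supervisor`, and `Worker` names are assumptions made for the example.

```python
from typing import Dict, List

# Hypothetical decomposition tables for a delivery robot.
SUBGOALS: Dict[str, List[str]] = {
    "deliver_package": ["go_to_shelf", "pick_item", "go_to_door"],
}
PRIMITIVES: Dict[str, List[str]] = {
    "go_to_shelf": ["turn_left", "drive_forward"],
    "pick_item": ["lower_arm", "close_gripper", "raise_arm"],
    "go_to_door": ["turn_right", "drive_forward"],
}


class Worker:
    """Bottom layer: executes one primitive command."""

    def execute(self, command: str) -> str:
        return f"done:{command}"


class Supervisor:
    """Middle layer: turns one subgoal into commands for the worker below."""

    def __init__(self, worker: Worker) -> None:
        self.worker = worker

    def handle(self, subgoal: str) -> List[str]:
        return [self.worker.execute(cmd) for cmd in PRIMITIVES[subgoal]]


class Planner:
    """Top layer: breaks the goal into subgoals; never talks to the worker."""

    def __init__(self, supervisor: Supervisor) -> None:
        self.supervisor = supervisor

    def achieve(self, goal: str) -> List[str]:
        log: List[str] = []
        for subgoal in SUBGOALS[goal]:
            log.extend(self.supervisor.handle(subgoal))
        return log


robot = Planner(Supervisor(Worker()))
print(robot.achieve("deliver_package"))
```

The planner holds a reference only to the supervisor, and the supervisor only to the worker. A wrong entry in `SUBGOALS` would be carried out faithfully by every layer beneath it.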

Key Characteristics

- Layered Structure: Agents are arranged in levels. Each level handles a different degree of complexity, from high-level planning to low-level action.

- Task Delegation: Higher agents assign tasks to lower agents. This division of responsibility keeps each agent focused on a specific and manageable role.

- Controlled Communication: Agents only interact with adjacent layers. This reduces confusion and keeps decision-making clean and organized at every level.

- Scalability: New layers or agents can be added without redesigning the whole system. This makes it easy to scale up for larger and more complex tasks.

Example of Hierarchical Agents

Self-driving vehicle systems, where planning, decision-making, and control are handled across different layers of the system.

Strengths

Breaks complex tasks into structured layers, ensuring clear coordination, scalability, and efficient execution across levels.

Best for

Complex tasks that need to be broken into structured, manageable steps across multiple levels of decision-making.

Limitation

If a higher-level agent makes a wrong decision, the error flows down through every layer below it, and the entire chain suffers the consequences.

Quick Comparison of All AI Agent Types

Here’s a side-by-side view of the different types of agents in AI to make choosing easier:

| Agent Type | Memory | Goals | Learns | Complexity | Best Use Case |
| --- | --- | --- | --- | --- | --- |
| Simple Reflex | No | No | No | Low | Spam filters, alarms |
| Model-Based Reflex | Yes | No | No | Medium | Robot vacuums, self-driving |
| Goal-Based | Yes | Yes | No | Medium-High | Navigation, chess AI |
| Utility-Based | Yes | Yes | No | High | Finance, recommendations |
| Learning Agent | Yes | Yes | Yes | Very High | LLMs, fraud detection |
| Multi-Agent | Varies | Varies | Varies | Highest | Traffic, trading, and drones |

Build Different Types of Agents in AI with SparxIT

Choosing the right AI agent is a business decision. The wrong architecture wastes time, budget, and opportunity. The right one drives real results. As a leading AI Agent development company, we help businesses design, develop, and deploy the right type of AI agent for their specific goals and workflows.

We work across industries including healthcare, finance, logistics, and retail. Stop guessing which agent fits your use case. Start building with confidence and clarity. Connect with us today and turn your AI vision into a working reality.


Frequently Asked Questions

How many types of agents are defined in artificial intelligence?

There is no single fixed number, but most standard classifications define 5 core types of AI agents:

- Simple Reflex Agents

- Model-Based Reflex Agents

- Goal-Based Agents

- Utility-Based Agents

- Learning Agents

What types of AI agents exist?

The main types of AI agents include:

- Simple Reflex Agents

- Model-Based Reflex Agents

- Goal-Based Agents

- Utility-Based Agents

- Learning Agents

- Multi-Agent Systems

- Hierarchical Agents

How long does it take to build an AI Agent?

It depends on complexity. A simple rule-based agent can take 2 to 4 weeks. A fully autonomous learning agent typically takes 3 to 6 months of development, training, and testing.

How much does AI Agent development cost?

Costs vary based on agent type, complexity, data requirements, and team expertise:

- Simple Reflex Agent: $2,000 to $8,000

- Model-Based Reflex Agent: $8,000 to $20,000

- Goal-Based Agent: $20,000 to $40,000

- Utility-Based Agent: $30,000 to $50,000

- Learning Agent: $50,000 to $90,000

- Hierarchical Agent: $90,000 to $200,000

- Multi-Agent System: $100,000 to $300,000+