Artificial intelligence has been around for decades, but nothing quite prepared the world for the arrival of generative AI. In just a few years, generative AI has moved from a research curiosity to a boardroom priority, powering tools that write code, compose music, design drugs, and hold fluent conversations in dozens of languages.

The global generative AI market is set to grow from $22.21 billion in 2025 to $324.68 billion by 2033, at a 40.8% CAGR (Grand View Research). Yet despite this rapid growth, the question “what is Gen AI, really?” still confuses many business leaders and technologists. This guide cuts through the noise.

Inside, you will find a plain-language generative AI definition, a step-by-step explanation of how it works, a breakdown of GenAI types, real-world generative AI examples, and an honest look at both the advantages and the risks.

Whether you are brand new or looking to sharpen your business model, this is the introduction to generative AI you have been waiting for.

What is Generative AI?

Generative AI (often shortened to gen AI or GenAI) is a category of artificial intelligence that can create new content such as text, images, audio, video, code, or synthetic data by learning patterns from large volumes of existing data.

Unlike traditional AI, which is typically trained to classify or predict (e.g., “Is this email spam? Will this customer churn?”), generative AI produces new outputs that did not exist before.

When you type a prompt into ChatGPT and receive a polished essay, or ask DALL·E for a painting in the style of Van Gogh, you are experiencing generative AI in action.

Generative AI Meaning in Simple Terms

Generative AI is a subset of machine learning in which neural network models are trained on massive datasets to generate statistically probable, contextually coherent, and often creative new outputs in response to a user prompt.

Think of a generative AI model as an extraordinarily well-read graduate student who has consumed an enormous library: books, research papers, and conversations. When you ask a question, the student does not quote the library verbatim; rather, they synthesize an original response based on everything they have absorbed.

Generative AI Basics

For business decision-makers and newcomers, here is what generative AI means in practical terms: it is a technology that can produce human-quality content at machine speed. Generative AI boils down to three ideas:

(1) It learns from data.

(2) It generates new content.

(3) It responds to natural-language instructions called prompts.

Introduction to Generative AI: A Brief History

Understanding generative AI requires a quick look at its timeline. The idea of machines generating content is not new, but the ability to do so convincingly is very recent.

| Year | Milestone |
| --- | --- |
| 1950s–1980s | Early rule-based language generators and symbolic AI. Outputs were rigid and brittle |
| 2014 | Ian Goodfellow and colleagues introduce Generative Adversarial Networks (GANs), one of the first deep learning architectures purpose-built for generation |
| 2017 | Google researchers publish the landmark “Attention Is All You Need” paper, introducing the Transformer architecture that underpins today’s LLMs |
| 2018–2020 | OpenAI releases GPT-1, GPT-2, and GPT-3, each a leap in language generation quality |
| 2021–2022 | Diffusion models (DALL·E 2, Stable Diffusion, Midjourney) democratise AI image generation |
| 2022 | ChatGPT launches and reaches an estimated 100 million users in about two months, at the time the fastest-growing consumer application on record. Generative AI enters mainstream consciousness |
| 2023–2025 | Multimodal models (GPT-4o, Gemini 1.5, Claude 3), open-source explosion, enterprise integration at scale |

The generative AI fundamentals we rely on today, like transformers, reinforcement learning from human feedback (RLHF), and diffusion processes, were all developed within the last decade. That acceleration is why the technology feels so sudden, even though the groundwork was laid over the course of 70 years of AI research.

How Does Generative AI Work?

How does generative AI function at a technical level? At its core, the process has three phases: training, fine-tuning, and inference (generation). Let’s walk through each in detail.

Phase 1: The Training Phase

During training, generative AI models are exposed to vast datasets. For a language model, this might be hundreds of billions of words drawn from books, websites, code repositories, and scientific papers.

| Quick Fact: OpenAI has not disclosed GPT-4’s size. In June 2023, a few months after GPT-4 was released, George Hotz (geohot) publicly claimed that it had roughly 1.8 trillion parameters, a figure that remains unconfirmed. |

The model adjusts billions of internal parameters (weights) through a mathematical process called backpropagation (the core algorithm used to train artificial neural networks). It helps models learn the statistical relationships between tokens (e.g., words, pixels, audio frames).

The objective is not memorization. The model learns underlying patterns, grammar, reasoning structures, and semantic relationships. It essentially builds an extraordinarily detailed probabilistic model of “what tends to follow what” across every domain in the training data.
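As a minimal sketch of the weight-adjustment idea (not how any production model is trained), the snippet below fits a single weight with gradient descent on a squared-error loss. The function name `train_single_weight`, the toy data, and the learning rate are assumptions made for illustration; real models apply the same kind of gradient update across billions of weights, with backpropagation computing the gradients.

```python
def train_single_weight(data, lr=0.05, steps=200):
    """Fit y ≈ w * x by gradient descent on mean squared error."""
    w = 0.0
    for _ in range(steps):
        # Gradient of mean((w*x - y)^2) with respect to w
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        # Step against the gradient to reduce the error
        w -= lr * grad
    return w


toy_data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]  # generated by y = 2x
learned_w = train_single_weight(toy_data)
print(round(learned_w, 3))
```

The learned weight settles close to 2, the value that generated the toy data. The point is only that repeated small corrections driven by the error are what "adjusting parameters" means in practice.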

To see how this probabilistic prediction works, follow a single prediction from input tokens through the model layers to output sampling:

- Input sequence: The sentence “the cat sat on the” is split into tokens. The model’s job is to predict what comes next after the final token.

- Conditional probability: At position 5 (“the”), the model conditions on all four preceding tokens; the full context collapses into a single probability distribution over the vocabulary.

- Logits → Softmax: The model produces raw scores (logits) for every candidate word. Softmax converts them into probabilities that sum to 1. After “the cat sat on the”, a word like “mat” dominates because the training data carved a very steep peak there.

- Temperature: Dividing logits by a temperature value before softmax reshapes the distribution. Low temperatures sharpen it toward the most likely token; high temperatures flatten it and give unlikely tokens more of a chance.

Every time you generate text, the model is essentially rolling a weighted die shaped by temperature.
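The logits, softmax, and temperature steps described above can be sketched in a few lines. The logit values and candidate words here are invented for illustration and do not come from any real model; `softmax_with_temperature` is a hypothetical helper name.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Convert raw scores into probabilities, scaled by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [score / temperature for score in logits.values()]
    max_scaled = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - max_scaled) for s in scaled]
    total = sum(exps)
    return {token: e / total for token, e in zip(logits, exps)}


# Hypothetical logits for the token after "the cat sat on the"
logits = {"mat": 5.0, "sofa": 3.0, "roof": 2.0, "moon": 0.5}

sharp = softmax_with_temperature(logits, temperature=0.5)
default = softmax_with_temperature(logits, temperature=1.0)
flat = softmax_with_temperature(logits, temperature=2.0)

for name, dist in [("T=0.5", sharp), ("T=1.0", default), ("T=2.0", flat)]:
    print(name, {token: round(p, 3) for token, p in dist.items()})
```

Comparing the three printed distributions shows the effect: the probability of “mat” is highest at the low temperature and lowest at the high temperature, while each distribution still sums to 1.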

For text models, a technique called self-supervised learning (an ML approach in which models learn from vast amounts of unlabeled data by automatically generating their own labels) is used. The model is given text with some tokens hidden, either the next token in a sequence (as in GPT-style models) or masked words within a sentence (as in BERT-style models), and must predict the missing tokens.

In image models, diffusion training progressively adds noise to images, step by step, until the original is reduced to pure static. The model is then trained to reverse that process, learning to peel back the noise and reconstruct the original. Each denoising step is a small probabilistic decision: given what the image looks like now, what should it look like one step cleaner? At generation time, this step is repeated many times, often hundreds.
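The noise-adding half of that process can be sketched as follows. This assumes the closed-form noising rule and linear noise schedule popularised by DDPM-style diffusion models, which the article does not specify; the schedule values, the four-number "image", and the function names are all illustrative. The learned denoising network, which is the hard part, is not shown.

```python
import math
import random


def alpha_bar_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative product of (1 - beta_t) for a linear noise schedule."""
    alpha_bars, running = [], 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(pixels, alpha_bar, rng):
    """Mix the original pixels with Gaussian noise at the given level."""
    signal, noise = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [signal * p + noise * rng.gauss(0.0, 1.0) for p in pixels]


rng = random.Random(0)
alpha_bars = alpha_bar_schedule(num_steps=1000)
image = [0.9, 0.1, -0.4, 0.7]  # toy "image" of four pixel values

slightly_noisy = add_noise(image, alpha_bars[10], rng)  # mostly signal
almost_static = add_noise(image, alpha_bars[-1], rng)   # mostly noise
print(round(alpha_bars[10], 4), round(alpha_bars[-1], 6))
```

Early in the schedule the signal weight is close to 1, so the image is barely changed; by the final step it is close to 0, so almost nothing of the original remains. Training teaches a network to undo one such step at a time.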

Phase 2: Fine-Tuning and Alignment

Once pre-training is complete, the raw model is powerful but unpolished. It has learned the statistical structure of language, but does not yet know how to be helpful, honest, or safe in conversation. This is where fine-tuning comes in.

Fine-tuning exposes the pre-trained model to a much smaller, curated dataset, typically thousands of high-quality examples. These teach the model a specific behavior: how to follow instructions, adopt a particular format, or operate within a defined domain such as medicine or law.

Reinforcement Learning from Human Feedback (RLHF)

Instruction fine-tuning alone is not enough to make a model reliably aligned with human values. This is where RLHF becomes critical. It works in three steps:

- Human raters rank multiple model outputs for the same prompt from best to worst.

- A separate neural network, the reward model, is then trained on these rankings, learning to simulate human judgment at scale.

- Finally, the main model is updated using a reinforcement learning algorithm (most commonly Proximal Policy Optimization). It rewards responses that score highly and penalizes those that do not.

The result is a model steered toward being helpful, harmless, and honest, and it is the core technique behind conversational AI systems like ChatGPT, Claude, and Gemini.
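The first two RLHF steps can be illustrated with a small sketch. It assumes a pairwise preference loss of the Bradley-Terry form, which is a common choice in published RLHF work but is not something the steps above specify; the reward scores and response names are invented, and the reinforcement learning update itself is not shown.

```python
import math
from itertools import combinations


def ranking_to_pairs(ranked_responses):
    """Turn a best-to-worst ranking into (preferred, rejected) pairs."""
    return list(combinations(ranked_responses, 2))


def pairwise_preference_loss(reward_preferred, reward_rejected):
    """-log(sigmoid(r_preferred - r_rejected)), computed stably."""
    margin = reward_preferred - reward_rejected
    # Equivalent to log(1 + exp(-margin)), written to avoid overflow
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


# Hypothetical reward-model scores for three responses to one prompt
scores = {"response_a": 1.8, "response_b": 0.4, "response_c": -0.9}
human_ranking = ["response_a", "response_b", "response_c"]  # best first

pairs = ranking_to_pairs(human_ranking)
total_loss = sum(
    pairwise_preference_loss(scores[preferred], scores[rejected])
    for preferred, rejected in pairs
)
print(pairs)
print(round(total_loss, 4))
```

The loss is small when the reward model already scores the human-preferred response higher, and large when it gets the order wrong, so minimising it pushes the reward model toward the raters' judgments.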

Phase 3: The Inference / Generation Phase

Once trained, the model enters inference mode. This is what happens every time you type a prompt. The model takes your input and generates an output step by step: token by token for text, or one denoising pass after another for diffusion-based image models, sampling from its learned probability distributions.

This is why generative AI outputs are probabilistic rather than deterministic. Ask the same question twice, and you may get a slightly different answer. The model is not retrieving a stored response; it is generating a new one each time by predicting the most contextually appropriate sequence.
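A toy generation loop makes the "different answer each time" behaviour concrete. The probability table below is hand-written and stands in for a trained model; it only looks at the previous token, whereas a real LLM conditions on the whole context. All names and values are assumptions for illustration.

```python
import random

# Hypothetical next-token probabilities, standing in for a trained model
NEXT_TOKEN_PROBS = {
    "<start>": {"the": 1.0},
    "the": {"cat": 0.6, "dog": 0.4},
    "cat": {"sat": 0.7, "slept": 0.3},
    "dog": {"sat": 0.5, "barked": 0.5},
    "sat": {"<end>": 1.0},
    "slept": {"<end>": 1.0},
    "barked": {"<end>": 1.0},
}


def generate(rng, max_tokens=10):
    """Sample one token at a time until <end> or the length limit."""
    tokens, current = [], "<start>"
    for _ in range(max_tokens):
        options = NEXT_TOKEN_PROBS[current]
        current = rng.choices(list(options), weights=list(options.values()))[0]
        if current == "<end>":
            break
        tokens.append(current)
    return " ".join(tokens)


rng = random.Random()  # unseeded, so repeated runs can differ
samples = [generate(rng) for _ in range(5)]
print(samples)
```

Running this several times usually produces a mix of sentences such as “the cat sat” and “the dog barked”: the same starting point, sampled repeatedly, yields different but always plausible outputs.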

Modern systems add further layers:

- Retrieval-Augmented Generation (RAG) connects the model to live databases for up-to-date answers.

- Fine-tuning adapts a base model to a specific domain.

- AI agents chain multiple model calls together to complete multi-step tasks autonomously.

Types of Generative AI

There is no single architecture behind all generative AI. Understanding the main types of generative AI helps you choose the right tool for your business use case.

Large Language Models (LLMs)

LLMs such as GPT-4o (OpenAI), Claude 3 (Anthropic), Gemini 1.5 (Google), and Llama 3 (Meta) are transformer-based autoregressive models that generate text by predicting the next token. They power AI chatbots, code assistants, summarisation engines, and document drafters. Gen AI models in this category are the most widely deployed in enterprise settings.

Diffusion Models

Diffusion models, including Stable Diffusion, DALL·E 3, and Midjourney, generate images by starting from pure random Gaussian noise (an image of meaningless static) and gradually denoising it under the guidance of a text prompt. The same principle applies to audio generation tools like Suno. These models are dominant for visual content creation and design ideation.

Multimodal & Emerging Generative AI Models

The frontier of generative AI technology is multimodal: models that handle text, images, audio, and video simultaneously. GPT-4o can see, hear, and speak. Google’s Veo 2 generates high-definition video from text descriptions. This shift is blurring the line between standalone GenAI models and end-to-end AI systems that handle the entire creative process.

Other notable architectures include Variational Autoencoders (VAEs) for controllable generation, and Generative Adversarial Networks (GANs). GANs are now mostly superseded for image generation, but they are still used for synthetic data generation and video game assets.

| Model Type | Output | Examples | Best For |
| --- | --- | --- | --- |
| LLMs (Transformers) | Text, Code | GPT-4o, Claude 3, Gemini | Chatbots, writing, coding, Q&A |
| Diffusion Models | Images, Audio | DALL·E 3, Stable Diffusion, Suno | Design, art generation, marketing visuals |
| GANs | Images, Synthetic Data | StyleGAN, CycleGAN | Data augmentation, game assets |
| VAEs | Images, Latent Interpolation | VAE-GAN hybrids | Style transfer, controlled generation |
| Multimodal Models | Text + Image + Audio + Video | GPT-4o, Gemini 1.5, Claude 3 | End-to-end creative workflows |
| Video Generation | Video Clips | Sora, Veo 2, Runway Gen-3 | Marketing, film, simulation |

Generative AI Examples Across Industries

The most compelling examples of generative AI are not hypothetical; they are happening right now across every major sector. Here are seven industry verticals where gen AI is already delivering measurable value.

| Industry | Use Case | Real-World Example |
| --- | --- | --- |
| Healthcare | Drug discovery and clinical note generation | Insilico Medicine used GenAI to design a novel fibrosis drug candidate, reducing discovery time from years to 18 months |
| Financial Services | Fraud detection, personalized advice, and report drafting | Morgan Stanley deployed GPT-4 to help 16,000 financial advisors surface insights from 100,000+ research documents instantly |
| Retail & E-Commerce | Product descriptions, personalized recommendations, and visual search | Amazon uses GenAI to generate, summarize, and optimize millions of product listings |
| Legal | Contract review, document summarisation, and legal research | Harvey AI (built on GPT-4) is used by A&O Shearman to review and draft contracts 4× faster than manual processes |
| Education | Personalized tutoring, content creation, and assessment generation | Khan Academy’s Khanmigo uses generative AI to provide Socratic tutoring to millions of students |
| Software Development | Code generation, debugging, and documentation | GitHub Copilot (originally powered by OpenAI Codex) is used by 1.3 million developers. A GitHub-run controlled study reported 55% faster task completion |
| Media & Marketing | Ad copy, video scripts, and visual asset creation | Coca-Cola used DALL·E and GPT-4 to create the “Create Real Magic” campaign, allowing fans to co-create brand art at scale |

Advantages of Generative AI

The benefits of generative AI go beyond novelty. Here is why organizations across industries are investing billions in adopting gen AI.

Unprecedented Productivity Gains

McKinsey estimates that generative AI and related technologies could automate work activities that absorb 60–70% of employees’ time today, and that generative AI could add $2.6–$4.4 trillion in annual economic value. Copilot-style tools have been shown to reduce time-on-task for writing, coding, and research.

Personalization at Scale

Before gen AI, personalizing content for millions of customers required enormous human resources. Generative AI technology enables dynamic personalization: unique product descriptions, tailored emails, and individualized learning paths, all generated on demand without per-item human effort.

Accelerated Research and Innovation

In pharmaceutical research, generative AI can compress drug discovery timelines that traditionally run a decade or longer. In structural biology, Google DeepMind’s AlphaFold models have predicted the structures of over 200 million proteins, far more than had ever been determined experimentally.

Democratization of Creation

Generative AI removes technical barriers. A business owner with no design skills can create professional marketing visuals. A solo developer can build full-stack applications with AI-assisted code generation. The benefits of generative artificial intelligence are not reserved for large enterprises; they scale down to individuals.

Cost Reduction in Content Operations

Enterprise teams report 40–70% reductions in content production costs when deploying gen AI for first drafts, translations, and format adaptations. A blog post that took 4 hours now takes 45 minutes with AI assistance and human review.

Limitations, Risks & Ethical Considerations of Generative AI

A complete picture of the generative AI tech stack requires an honest discussion of its limitations. These are not reasons to avoid gen AI, but they are factors every decision-maker must understand before deploying it.

| Risk | What It Means | Mitigation |
| --- | --- | --- |
| Hallucinations | Models generate plausible-sounding but factually incorrect information with full confidence | RAG pipelines, human review, grounding with verified sources |
| Bias & Fairness | Training data reflects historical biases; models can perpetuate gender, racial, or cultural stereotypes | Diverse training data, bias audits, and constitutional AI techniques |
| IP & Copyright Issues | Models trained on web data may reproduce copyrighted content; ownership of AI-generated work is legally unclear | Clear usage policies, IP indemnity clauses (offered by some vendors), and human editorial oversight |
| Data Privacy | Sensitive data entered into public AI tools may be used for model training or exposed | Use private/enterprise deployments, data processing agreements, and local model hosting |
| Environmental Cost | Training large models consumes significant compute and energy (GPT-3 training ≈ 552 tCO2e) | Model efficiency improvements; green data centers; use pre-trained models rather than training from scratch |
| Misinformation & Deepfakes | Gen AI enables mass production of synthetic media like audio, video, and images that can deceive at scale | Watermarking (C2PA standard), media literacy, detection tools (e.g., Google SynthID) |

How Enterprises Can Adopt Generative AI Technology

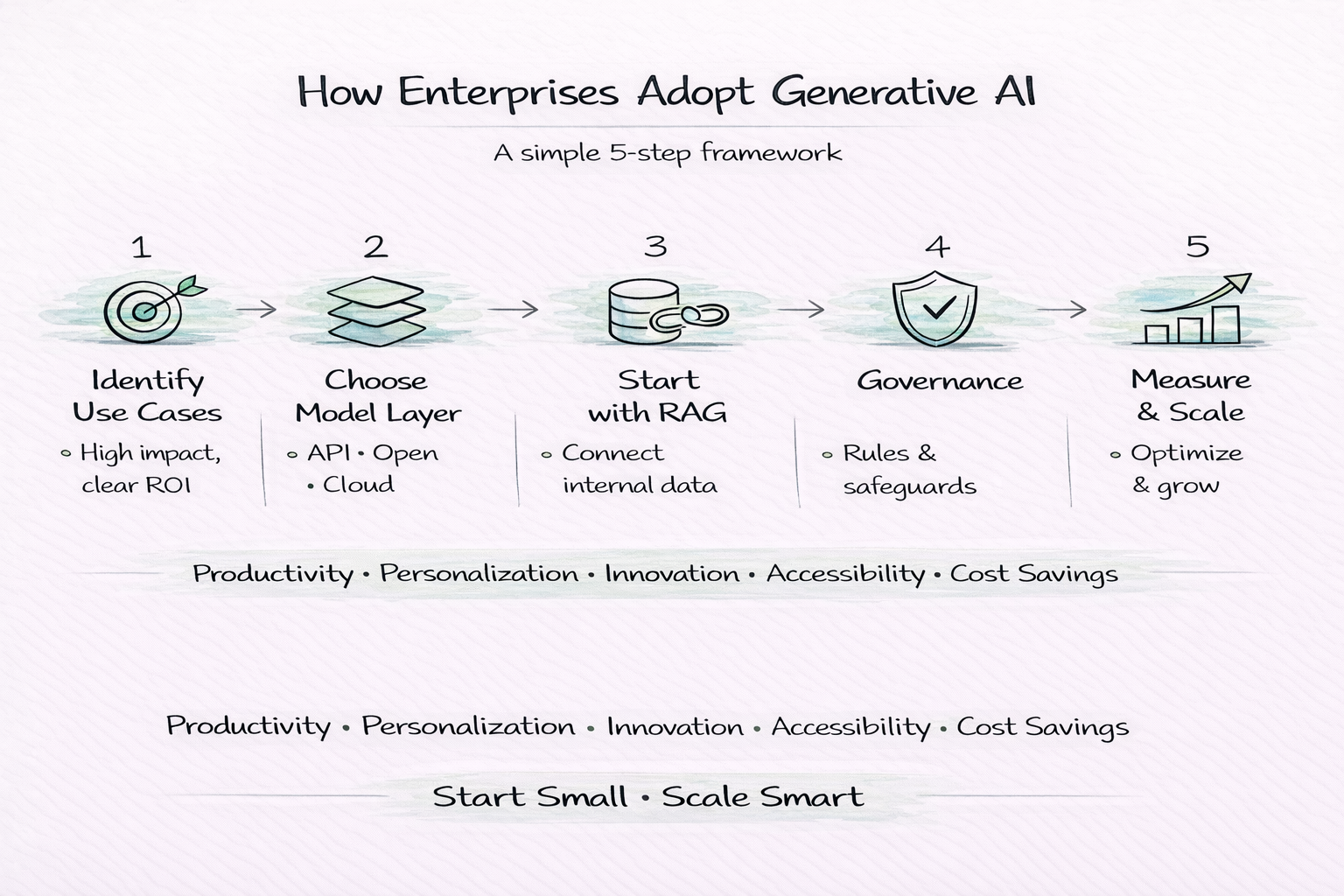

Understanding generative AI technology is one thing; deploying it effectively is another. Here is a practical five-step framework for organizations beginning or scaling their gen AI journey.

Step 1: Identify High-Value Use Cases

Start with problems where gen AI’s strengths align, such as repetitive knowledge work, content creation, customer query handling, and data synthesis. Prioritize use cases with clear ROI and low risk.

Step 2: Choose the Right Model Layer

Decide between proprietary APIs (OpenAI, Anthropic, Google), open-source models (Llama, Mistral), or cloud-hosted foundation models (AWS Bedrock, Azure OpenAI, Google Vertex AI). Each involves different trade-offs in terms of cost, data privacy, customisability, and performance.

Step 3: Start with Retrieval-Augmented Generation (RAG)

For most enterprise deployments, connecting a language model to your internal knowledge base delivers the best accuracy-to-cost ratio without the expense and complexity of full fine-tuning.
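The retrieve-then-prompt pattern behind RAG can be sketched without any external service. This is a deliberately simplified illustration: retrieval here uses word overlap, whereas production systems typically use embedding-based vector search, and the final call to a language model is left out. The knowledge base entries, the prompt wording, and the function names are all hypothetical.

```python
def tokenize(text):
    """Lowercase the text and split it into a set of bare words."""
    return {word.strip(".,?!").lower() for word in text.split()}


def retrieve(question, documents, top_k=2):
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, context_docs):
    """Assemble the retrieved passages and the question into one prompt."""
    context = "\n".join(f"- {doc}" for doc in context_docs)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


knowledge_base = [
    "Refunds are processed within 5 business days.",
    "Support is available Monday to Friday, 9am to 6pm.",
    "Enterprise plans include a dedicated account manager.",
]
question = "How long do refunds take?"
top_docs = retrieve(question, knowledge_base, top_k=1)
prompt = build_prompt(question, top_docs)
print(prompt)  # this string would then be sent to the language model
```

Because the model answers from passages fetched at query time, updating the knowledge base changes the answers without retraining anything, which is where the favourable accuracy-to-cost ratio comes from.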

Step 4: Establish Governance & AI Policies

Define acceptable use policies, data classification rules, output review workflows, and human-in-the-loop checkpoints before broad deployment. Responsible AI governance is a legal and reputational necessity.

Step 5: Measure, Iterate & Scale

Define success metrics upfront, such as time saved, cost per output, quality scores, and user satisfaction. Run controlled pilots, measure rigorously, and scale only what demonstrably delivers value.

Conclusion

Generative AI is a genuine capability shift, not just hype. It’s already changing how teams write, build, research, and create. But it works best when humans stay in the loop, applying the judgment and nuance that models still lack.

The organizations seeing real results aren’t deploying it everywhere; they’re starting small, measuring honestly, and scaling what works. If you’re just beginning, pick one time-consuming task, try a tool for a month, and go from there. It’s not magic. Generative AI is a powerful tool that rewards thoughtful use. If you need more clarity on generative AI vs. AI agents vs. agentic AI, you can consult an AI services provider.

How Can SparxIT Help You Build an Innovative Generative AI Solution

Building a generative AI solution is more than plugging into an API; it requires the right architecture, responsible design, and a team that understands both the technology and your business goals. That’s where SparxIT comes in.

As a leading Generative AI development company, we bring hands-on expertise across the full generative AI stack. From LLM fine-tuning and RAG pipelines to custom chatbot development, multimodal AI apps, and enterprise AI integration, SparxIT covers the entire generative AI development lifecycle under one roof.

Whether you’re looking to automate content workflows, build an intelligent customer support system, or embed gen AI into your existing product, we design solutions that are scalable, secure, and built for real-world performance.

With a proven track record across industries, we don’t just deliver code; we deliver outcomes. Let’s build something that actually works for your business.

Frequently Asked Questions

What is GenAI vs regular AI?

Regular AI (discriminative AI) classifies or predicts based on existing data (e.g., 'Is this a cat?'). GenAI creates new data (e.g., 'Draw me a picture of a cat in space'). They use overlapping techniques but serve fundamentally different goals.

Is generative AI the same as ChatGPT?

No. ChatGPT is a product built on top of generative AI (specifically OpenAI's GPT-4 family). Generative AI is the broader technology category. ChatGPT is one application of it, much like how 'search engine' is a technology category and Google is one product.

What are the most popular generative AI models today?

The most widely used generative AI models include GPT-4o (OpenAI), Claude 3 Opus/Sonnet (Anthropic), Gemini 1.5 Pro (Google), Llama 3 (Meta), Stable Diffusion XL (image), and Sora (video). Each excels in different modalities and use cases.

What are the main risks of generative AI?

The primary risks are hallucinations (factually incorrect outputs), bias, IP/copyright uncertainty, data privacy exposure, and potential misuse for generating misinformation. Most risks are manageable with proper governance, human oversight, and vendor-level protections.

How long does it take to build a generative AI product?

It depends on the scope. A simple AI-powered feature using an existing API (like OpenAI or Claude) can go live in days to weeks. A fully custom fine-tuned model with enterprise integrations typically takes 3 to 6 months. Training a foundation model from scratch takes 6 months to around 2 years.

How can small businesses use generative AI?

SMBs can start with off-the-shelf tools that require zero technical expertise. For example, ChatGPT or Claude for writing and research, Canva AI for design, GitHub Copilot for code, and Otter.ai for meeting transcription. The key is to identify one high-frequency, time-consuming task and automate it first.